TL;DR

Cerebras Systems is reportedly raising both the price range and the share count of its IPO as demand for AI hardware surges. The move points to growing interest in compute options beyond GPUs, particularly for large language model inference.

Cerebras Systems is planning to increase the size and price of its upcoming IPO, with a new range of $150-$160 per share and 30 million shares offered, up from earlier estimates of $115-$125 and 28 million shares, according to sources cited by Reuters.
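
For a rough sense of scale, the reported ranges imply the gross proceeds computed below. This is a back-of-the-envelope sketch, not a figure from the report: it assumes every offered share is sold at a price inside the range and ignores underwriting fees and any over-allotment option. The function name `gross_proceeds` is illustrative.

```python
def gross_proceeds(shares: int, low: float, high: float) -> tuple[float, float]:
    """Return the (low, high) gross proceeds in dollars for a share count and price range."""
    return shares * low, shares * high


# Figures as reported in the article (attributed to sources cited by Reuters).
earlier = gross_proceeds(28_000_000, 115.0, 125.0)
revised = gross_proceeds(30_000_000, 150.0, 160.0)

for label, (low_total, high_total) in (("earlier", earlier), ("revised", revised)):
    print(f"{label}: ${low_total / 1e9:.2f}B to ${high_total / 1e9:.2f}B")
```

Under these assumptions the earlier terms imply roughly $3.2 billion to $3.5 billion, and the revised terms roughly $4.5 billion to $4.8 billion.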

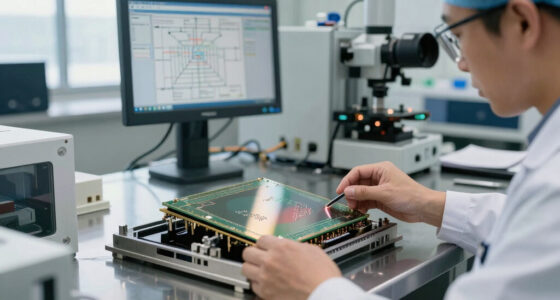

The move comes amid surging demand for AI hardware, driven by the compute needs of large language model training and inference. Nvidia remains dominant in GPU-based training and inference, but Cerebras takes a different approach: wafer-scale chips with large amounts of on-chip SRAM and very high memory bandwidth. That design targets the serial, memory-bandwidth-bound steps of inference, such as generating output tokens one at a time, where the speed of reading model weights from memory can limit a GPU.

Demand for AI chips is booming: companies such as SpaceX are contracting for large amounts of GPU capacity for inference, and AI firms are expanding their hardware infrastructure. Cerebras’ wafer-scale architecture aims to complement or challenge GPUs for workloads that need high memory bandwidth and fast access, such as large language model inference. Because the compute cores and memory sit on one wafer, traffic within a chip avoids the slower chip-to-chip links that connect separate GPUs, which could make it a more efficient platform for specific AI tasks.

Why It Matters

This development signals a potential shift in AI hardware investment and architecture, with increased interest in heterogeneous compute solutions beyond GPUs. Cerebras’ IPO expansion reflects broader industry trends towards specialized hardware that can better handle the serial, memory-intensive parts of inference workloads, which are critical for deploying large language models at scale.

For investors and AI developers, this indicates a diversification of hardware options and a recognition that the future of AI compute may involve multiple architectures optimized for different tasks, not just GPUs. The move also underscores the importance of memory bandwidth and wafer-scale integration in supporting next-generation AI models.

AI Systems Performance Engineering: Optimizing Model Training and Inference Workloads with GPUs, CUDA, and PyTorch

As an affiliate, we earn on qualifying purchases.

Background

Historically, GPUs from Nvidia have dominated AI training and inference, thanks to their programmability, high memory bandwidth, and advanced networking. This has enabled the training of trillion-parameter models and efficient inference for large language models. Companies like SpaceX and Anthropic have leveraged GPU capacity for both training and inference, highlighting the flexibility of GPU-based infrastructure.

However, Cerebras’ wafer-scale technology takes a different approach, building a single, massive chip from an entire silicon wafer. Its latest chip, the WSE-3, provides 44 GB of on-chip SRAM with 21 PB/s of memory bandwidth, far above the memory bandwidth of a GPU, though with less total memory capacity. The architecture is designed to handle the serial, memory-bound steps of inference more effectively than GPUs, which can be bottlenecked by memory bandwidth.
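
To see why memory bandwidth matters for the serial steps of inference, consider a simple bound: if generating each token requires reading every model weight once, the token rate cannot exceed bandwidth divided by the size of the weights. The sketch below applies that bound to the 44 GB and 21 PB/s figures quoted above. Everything else is an assumption for illustration: the 8-billion and 70-billion parameter model sizes, the 16-bit weights, and the 3.35 TB/s figure used for a generic HBM-based GPU are hypothetical inputs, and the bound ignores compute time, caches, batching, and multi-chip setups.

```python
def decode_tokens_per_second(params: float, bytes_per_param: float,
                             bandwidth_bytes_per_s: float) -> float:
    """Upper bound on single-stream decode speed if all weights are read once per token."""
    return bandwidth_bytes_per_s / (params * bytes_per_param)


def fits_in_memory(params: float, bytes_per_param: float, capacity_bytes: float) -> bool:
    """True if the weights alone fit in the given memory capacity."""
    return params * bytes_per_param <= capacity_bytes


# Figures quoted in the article for the WSE-3.
WSE3_SRAM_BYTES = 44e9   # 44 GB of on-chip SRAM
WSE3_BANDWIDTH = 21e15   # 21 PB/s

# Assumption, not from the article: a generic HBM-based GPU at about 3.35 TB/s.
ASSUMED_GPU_BANDWIDTH = 3.35e12

# Hypothetical models with 16-bit (2-byte) weights.
for name, params in (("8B model", 8e9), ("70B model", 70e9)):
    fits = fits_in_memory(params, 2, WSE3_SRAM_BYTES)
    wafer_bound = decode_tokens_per_second(params, 2, WSE3_BANDWIDTH)
    gpu_bound = decode_tokens_per_second(params, 2, ASSUMED_GPU_BANDWIDTH)
    print(f"{name}: fits in 44 GB = {fits}, "
          f"bandwidth bound = {wafer_bound:,.0f} tokens/s (wafer) "
          f"vs {gpu_bound:,.0f} tokens/s (assumed GPU)")
```

The same arithmetic shows both sides of the trade-off: the bandwidth bound is thousands of times higher on the wafer, but a hypothetical 70-billion-parameter model at 16 bits needs 140 GB for its weights and would not fit in 44 GB on a single chip.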

The company’s IPO plans come amid a broader surge in AI hardware demand, driven by the rapid growth of large language models and the need for efficient inference solutions. While Nvidia continues to lead in GPU-based compute, Cerebras’ approach signals a potential expansion of the hardware landscape, especially for workloads that benefit from high memory bandwidth and low latency.

“Cerebras is considering a new IPO price range of $150-$160 a share, up from $115-$125, and raising the number of shares to 30 million.”

— Reuters source

“Cerebras’ wafer-scale architecture could offer a significant advantage for inference workloads that are memory bandwidth-bound.”

— Industry analyst

What Remains Unclear

It is not yet clear how the market will respond to Cerebras’ IPO or whether its wafer-scale approach will significantly challenge GPU dominance in AI workloads. Details about the company’s valuation and future product roadmap remain undisclosed.

What’s Next

Following the IPO, Cerebras will likely focus on scaling its production and expanding its customer base in AI inference and training markets. Industry analysts will monitor how its wafer-scale chips perform in real-world deployments and whether they gain wider adoption as a complementary or alternative solution to GPUs.

Key Questions

Why is Cerebras increasing its IPO size now?

Demand for AI hardware, especially for inference workloads, is rapidly growing, prompting Cerebras to raise additional capital to support expansion and capitalize on market interest.

How does Cerebras’ wafer-scale architecture differ from traditional GPUs?

Each Cerebras chip is built from an entire silicon wafer, so communication within it avoids the slower chip-to-chip links that connect separate GPUs. Its on-chip SRAM also provides far higher memory bandwidth, which benefits memory-bound inference tasks.

Will Cerebras’ technology replace GPUs in AI workloads?

It is unlikely to replace GPUs entirely but may serve as a specialized complement, especially for inference tasks that demand high memory bandwidth and low latency.

What are the risks for Cerebras’ IPO and technology approach?

Market acceptance, execution risks, and competition from established GPU manufacturers like Nvidia pose potential challenges to Cerebras’ growth and valuation.